Consul doesn’t have an endpoint available to gather these metrics, so we have to make use of the “statsd-exporter”. We already configured the Consul Servers to send metrics to a statsd server, so we only have to make sure we start one on each host running a Consul Server.

Before we start a statsd-exporter, we have to do some configuration. We need to make sure we have a statsd mapper file. With this file we map statsd fields into fields for Prometheus, and we can add labels per metric. On this page I have configured almost all mapping entries.

The first line in a mapping construction is the name of the statsd field. You’ll see asterisks in it; these are wildcards, and the value each one matches can be reused by assigning it to a label. The first asterisk can be used as $1, the second as $2, etc. The “name” is the name of the metric field in Prometheus, in this case consul_runtime. Prometheus doesn’t accept dots in names, so we have to use underscores. We then create a label named “type” and assign it the value $2.

The original statsd field that Consul sent to the statsd-exporter contains b139924a6f44 and ends in num_goroutines. With this mapping construction, $1 gets the value b139924a6f44 and $2 gets the value num_goroutines. The last “host” label is something I add with Ansible. I use Ansible to deploy this statsd mapper file (and all other monitoring-related configuration) to all my Consul servers, and then I can filter in Prometheus, or in a graphing tool like Grafana, which metrics belong to which host.

I use the Docker container for the statsd-exporter: I place the statsd mapper file on /data/nf and start the container with a single docker command. In that command I mount the statsd mapper file as ro (read-only), open 2 ports and configure the statsd-exporter tool to use the mapper file. One port (9125) is where the statsd-exporter receives the statsd performance metrics, and the other port (9102) is used by Prometheus to scrape these metrics.
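To make the wildcard mapping described above more concrete, here is a minimal Python sketch of the idea. It is not the statsd-exporter's own code, only an illustration of how a pattern with asterisks can turn a statsd field into a Prometheus metric name plus labels. The pattern `consul.*.runtime.*`, the full statsd field name and the host label value `consul-server-1` are assumptions reconstructed from the $1 and $2 values mentioned in the text, and the helper names are hypothetical.

```python
import re


def compile_glob(pattern):
    """Turn a statsd pattern with '*' wildcards into a regex.

    Each '*' captures exactly one dot-free component of the name.
    """
    parts = [re.escape(part) for part in pattern.split("*")]
    return re.compile("^" + "([^.]+)".join(parts) + "$")


def apply_mapping(statsd_name, pattern, metric_name, labels):
    """Return (metric_name, labels) with $1, $2, ... filled in,
    or None when the statsd name does not match the pattern."""
    match = compile_glob(pattern).match(statsd_name)
    if match is None:
        return None

    def expand(template):
        # Replace $N with the value captured by the N-th asterisk.
        return re.sub(r"\$(\d+)", lambda m: match.group(int(m.group(1))), template)

    return metric_name, {key: expand(value) for key, value in labels.items()}


# Assumed pattern and statsd field name (not taken verbatim from the post).
PATTERN = "consul.*.runtime.*"
STATSD_NAME = "consul.b139924a6f44.runtime.num_goroutines"

result = apply_mapping(
    STATSD_NAME,
    PATTERN,
    "consul_runtime",
    {"type": "$2", "host": "consul-server-1"},  # host value is a placeholder
)
print(result)
```

In this sketch the first asterisk captures b139924a6f44 and the second captures num_goroutines, so the “type” label ends up as num_goroutines, which matches the behaviour described in the text.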
When you use Prometheus, you’ll use exporters for your applications or databases to expose the metrics for Prometheus. Prometheus will scrape these metrics every 15 seconds (well, you can configure that) and store them in the database.

You can see some more information about configuring Consul for Telemetry on this page. In this blogpost we will use the “statsd_address” option. To make this happen, we have to update the Consul configuration on the Consul Servers and add a telemetry configuration with this option. The IP address is that of the host itself, and in this case we have to send the metrics to port 9125. Once we have configured this on all the Consul Servers, we need to restart them one by one so we keep the Consul Cluster running.
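As a rough illustration of that configuration, the following Python sketch generates a JSON fragment with the “statsd_address” option inside a telemetry block. The IP address 192.0.2.10 is a placeholder, the helper name is hypothetical, and the exact layout of your own Consul configuration may differ, so treat this as a sketch rather than the configuration used in this post.

```python
import json


def telemetry_config(host_ip, port=9125):
    """Build a Consul configuration fragment that sends statsd
    metrics to the statsd-exporter on the host itself."""
    return {"telemetry": {"statsd_address": f"{host_ip}:{port}"}}


# Placeholder address; use the IP address of the Consul Server host itself.
config = telemetry_config("192.0.2.10")
print(json.dumps(config, indent=2))
```

Generating the fragment per host like this fits well with a deployment tool such as Ansible, since the only value that changes per server is the IP address.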

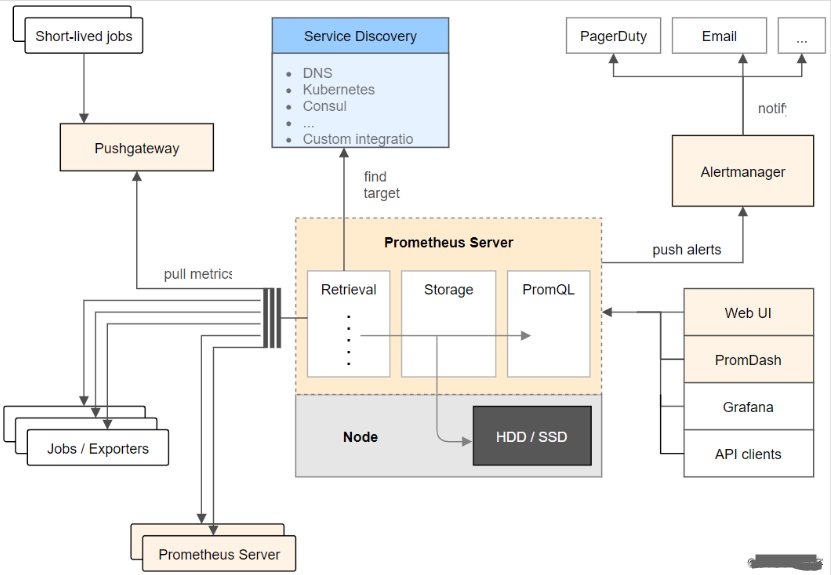

My current choice for a monitoring tool is Prometheus. With Prometheus you can easily gather metrics of applications and/or databases to see the actual performance of the application or database. Why Prometheus, and not Zabbix or another monitoring tool, is a subject for maybe another blogpost. When you have a tool like Zabbix or Nagios, you’ll need to write one or multiple scripts to gather all metrics and see how much you can store in your database without losing performance of your monitoring tool.

One interesting application to monitor is Consul. When you search Google for monitoring Consul, you’ll find a lot of pages that show how you can use Consul as a monitoring tool, but not many on how you can monitor Consul itself. In this blogpost I’ll describe what steps I have taken to monitor Consul. Please keep in mind this is just a start and it is incomplete, so if you have suggestions to improve it, please let me know.

Consul has a way of exposing metrics, called Telemetry. With Telemetry you can configure Consul to send performance metrics to external tools or applications, so you can monitor the performance of Consul.
